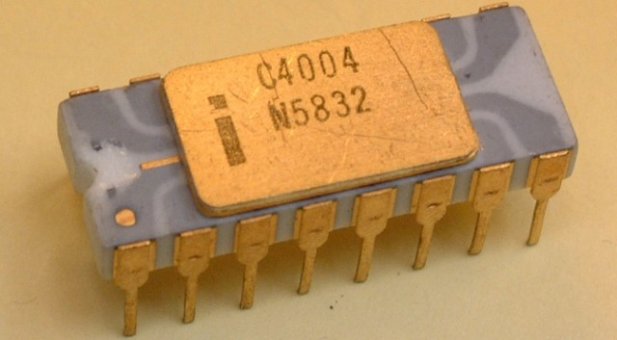

Designed by the fantastically forenamed Federico Faggin, Ted Hoff, and Stanley Mazor, the 4004 was a 4-bit, 16-pin microprocessor that operated at a mighty 740 kHz. At roughly eight clock cycles per instruction cycle (fetch, decode, execute), that means the chip was capable of executing up to 92,600 instructions per second. We can't find the original list price, but one source indicates that it cost around $5 to manufacture, or $26 in today's money.
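As a quick sanity check on that throughput figure, here is a minimal Python sketch of the arithmetic, assuming exactly 740,000 clock ticks per second and exactly eight clocks per instruction cycle (the constant and function names are ours, purely for illustration). The straight division lands on 92,500, a shade under the quoted 92,600; the small gap is probably down to how the instruction cycle time was rounded.

```python
# Back-of-the-envelope throughput for the 4004.
# Assumptions (illustrative): exactly 740 kHz, exactly 8 clocks per instruction cycle.
CLOCK_HZ = 740_000
CYCLES_PER_INSTRUCTION = 8


def instructions_per_second(clock_hz: int, cycles_per_instruction: int) -> float:
    """Peak instruction rate if every instruction takes one full instruction cycle."""
    return clock_hz / cycles_per_instruction


ips = instructions_per_second(CLOCK_HZ, CYCLES_PER_INSTRUCTION)
instruction_time_us = 1_000_000 / ips  # length of one instruction cycle in microseconds

print(f"{ips:,.0f} instructions per second")
print(f"{instruction_time_us:.2f} microseconds per instruction cycle")
```

Note that this is a ceiling: any instruction that needs more than one instruction cycle would pull the real-world rate down.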

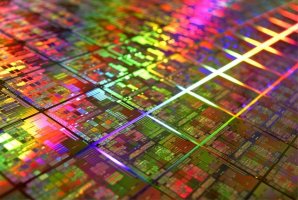

The 4004 used state-of-the-art Silicon Gate Technology (SGT) PMOS logic, a technique that Faggin perfected at Fairchild Semiconductor in 1968 and the world's first commercial metal-oxide-silicon (MOS) process to use self-aligned silicon gates. This breakthrough allowed the 4004 to have no fewer than 2,300 transistors and a feature size of 10 microns. By comparison, there are half a billion transistors in a Sandy Bridge chip, with a feature size of just 0.032 microns. Considering a human hair is around 100 microns wide, the 4004 was still rather impressive. But irrespective of feature size or transistor count, the fact that it was carved from a single piece of silicon is what made the 4004 truly spectacular. Faggin was so proud of his creation that he even signed the chip "FF".

In real-world use, the 4004's 92,600 instructions per second equated to the addition of two eight-digit numbers in 850 microseconds, or around 1,200 calculations per second. It's perhaps not surprising that the first use of the 4004 was in the Japanese Busicom 141-PF calculator. In fact, it was Busicom that originally asked Intel to create the 4004, as the design from its in-house engineers needed 12 integrated circuits to make the 141 calculator work. Busicom actually owned the design of the 4004 and had exclusive rights to its use, but eventually agreed to let Intel sell the chip commercially, and thus the fateful appearance of that 1971 Electronic News ad.
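For the curious, the "around 1,200 calculations per second" figure follows directly from the 850-microsecond addition time. The short Python sketch below redoes the sum using only the numbers quoted above; the variable names are ours, and the instructions-per-addition value is our own derived estimate rather than anything from Intel's documentation.

```python
# Figures quoted in the article.
ADDITION_TIME_US = 850             # time to add two eight-digit numbers, in microseconds
INSTRUCTIONS_PER_SECOND = 92_600   # quoted peak instruction rate

# How many such additions fit into one second.
additions_per_second = 1_000_000 / ADDITION_TIME_US

# Derived estimate: instruction cycles consumed by one eight-digit addition.
instructions_per_addition = INSTRUCTIONS_PER_SECOND * ADDITION_TIME_US / 1_000_000

print(f"{additions_per_second:.0f} additions per second")
print(f"about {instructions_per_addition:.0f} instruction cycles per addition")
```

In other words, each eight-digit addition works out to a little under 80 instruction cycles, which is a reminder of how much work a 4-bit chip has to do to handle numbers that long.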

Despite the 4004's success (and the popularity of its 8-bit successors, the 8008 and 8080), Intel was still very much a DRAM and SRAM company at the time. It wasn't until the late '70s with the 8088, which powered the IBM PC and its clones, that Intel decided to make the shift towards microprocessors, and as we now know, the rest is history.